
Ecommerce websites, especially large ecommerce websites, commonly have indexing issues. Either your pages are not all being indexed by search engines or you have far too many indexed pages (typically referred to as “index bloat”). This article addresses the former issue and will help you do the following:

- Determine how well your pages are being indexed by Google

- Diagnose why some of your pages are being excluded

- Fix common SEO issues that lead to pages not being included in Google’s index

Here are the most common reasons why your ecommerce web pages are not being indexed:

- Poor crawl efficiency: Google is crawling tons of unnecessary pages, leaving many of your important pages undervalued or undiscovered

- Duplicate content: Many of your product or category pages have near-duplicate content, which leads Google to exclude them from its index

How to Determine if Pages are Not Being Indexed

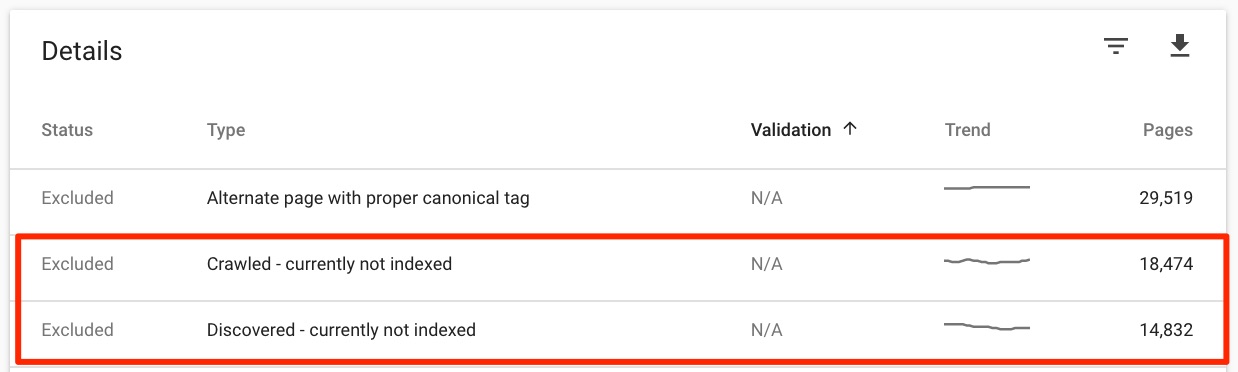

One of our clients is an online retailer in the lighting industry. As you can imagine, they have tens of thousands of products. During our SEO auditing process, we discovered that more than half of their products were not even indexed!

Here’s a step-by-step approach for checking your indexed pages:

- Log into your Google Search Console account

- Navigate to your website’s Coverage report (labeled “Pages” under “Indexing” in newer versions of Search Console)

- Click to view your “Excluded” pages

- Pay close attention to the following categories of excluded pages:

  - Crawled - currently not indexed

  - Discovered - currently not indexed

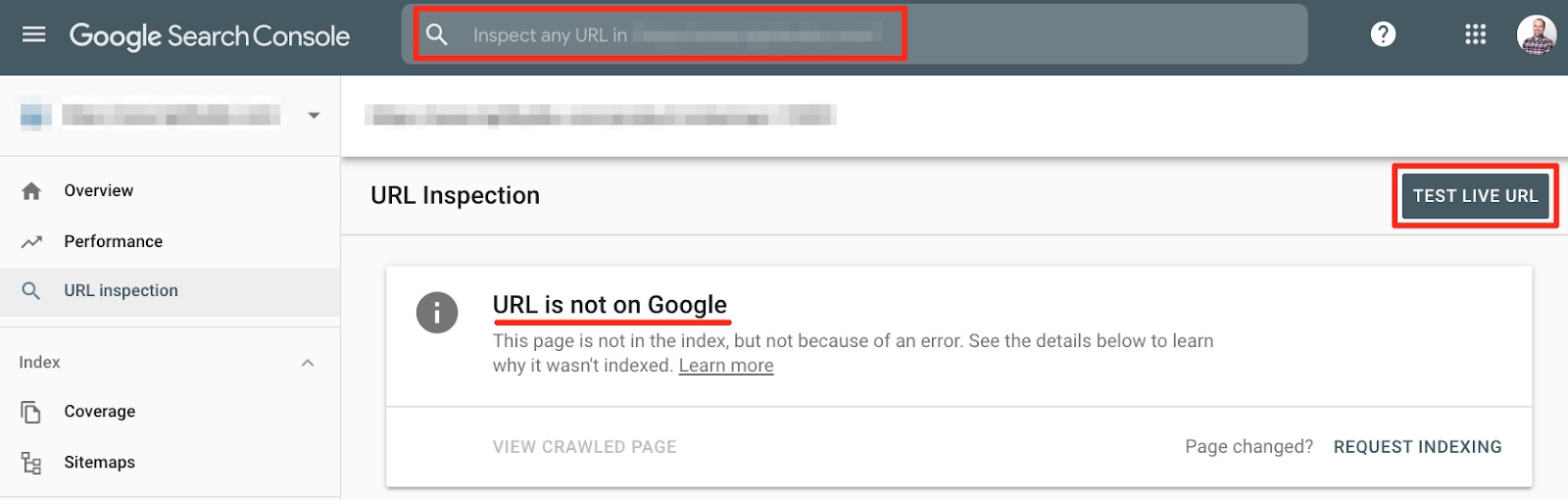

Do you see any product or category pages that are excluded but shouldn’t be? Use Google Search Console’s URL Inspection tool to spot-check individual URLs and see more details. Click “Test Live URL” to get up-to-date information and view a screenshot of the page.

Fix the Obvious Issues First

If you’ve arrived at this article, you may have already investigated these basic causes of indexing problems. Still, it doesn’t hurt to double-check them before moving on to more advanced issues (which we’ll cover soon).

Check your Robots.txt File and Meta Robots Tags

Are you accidentally blocking access to certain pages via your robots.txt file? Or are you accidentally noindexing pages that should be indexable?

Tip: Run a Screaming Frog crawl of your website with the user-agent set to “Googlebot”. Check the “Indexability” column to make sure that any page that is currently excluded from the index is actually able to be indexed.
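If you'd rather spot-check a handful of URLs with a script, here is a minimal Python sketch of both checks. It assumes you already have the robots.txt contents and a page's HTML as strings; the function names are hypothetical. Note that Python's built-in robots.txt parser uses simple prefix matching and does not interpret wildcard rules the way Googlebot does, so treat the result as a first pass rather than a verdict.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def is_blocked_by_robots(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the robots.txt rules disallow `url` for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


class _MetaRobotsParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str) -> bool:
    """Return True if the page's meta robots tags contain a noindex directive."""
    parser = _MetaRobotsParser()
    parser.feed(html)
    return "noindex" in parser.directives
```

A page that should be indexable needs both `is_blocked_by_robots(...)` and `has_noindex(...)` to come back `False`. This sketch does not look at the `X-Robots-Tag` HTTP header, which can also carry a noindex directive.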

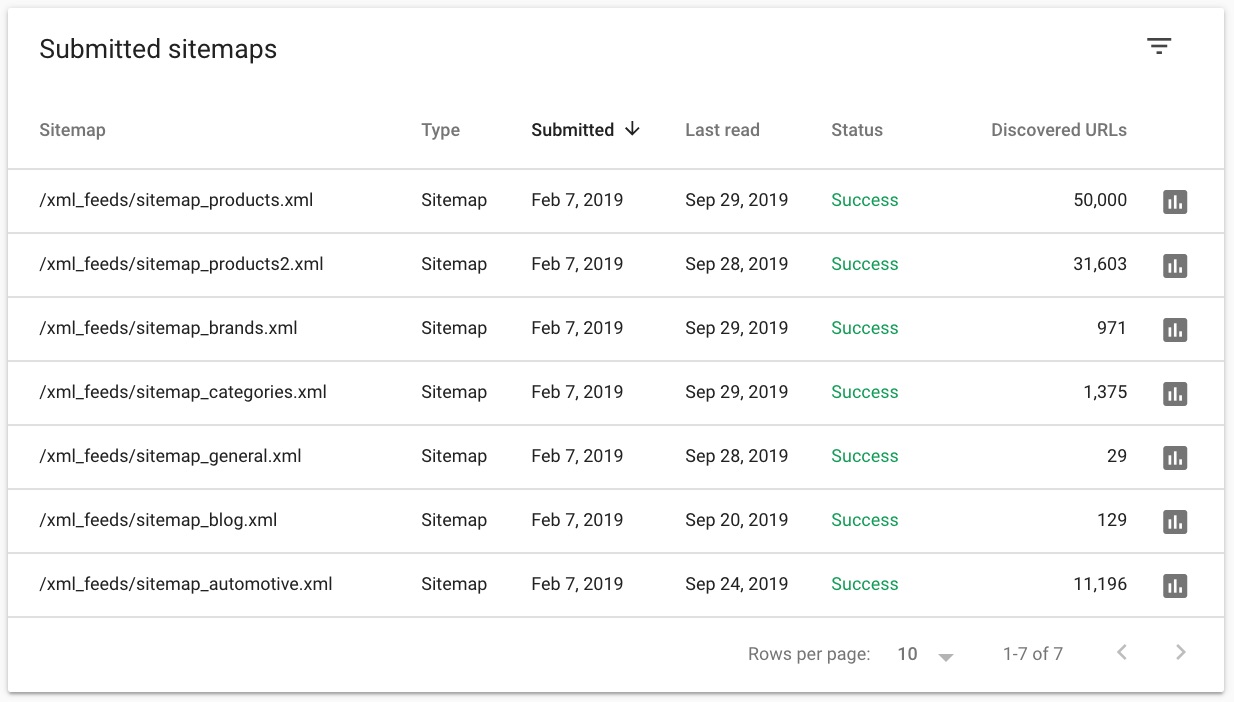

Clean Up and Optimize your XML Sitemaps

Are all of your pages included in your XML sitemap? Is your XML sitemap cluttered with dead or redirected pages? Having a clean XML sitemap can go a long way towards having a better-indexed website.

It’s also recommended that you create individual XML sitemaps for the various sections of your website. For example, your product pages should be in their own XML sitemap, separate from that of your category pages.
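As a rough illustration of splitting sitemaps by section, the Python sketch below groups URLs by a path prefix and builds one sitemap per group. The prefixes (`/products/`, `/category/`) and function names are assumptions made for the example; your URL structure will likely differ. It requires Python 3.8 or later.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict
from urllib.parse import urlsplit

SITEMAP_XMLNS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def group_by_section(urls, section_prefixes):
    """Group URLs by the first matching path prefix, e.g. {"products": "/products/"}."""
    groups = defaultdict(list)
    for url in urls:
        path = urlsplit(url).path
        for section, prefix in section_prefixes.items():
            if path.startswith(prefix):
                groups[section].append(url)
                break
    return dict(groups)


def build_sitemap(urls) -> bytes:
    """Serialize a list of URLs as a UTF-8 encoded XML sitemap."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_XMLNS)
    for url in urls:
        url_element = ET.SubElement(urlset, "url")
        ET.SubElement(url_element, "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
```

Each group's output could then be written to its own file (for example, a products sitemap and a categories sitemap) and submitted separately in Google Search Console, which makes it easier to see which section has indexing gaps.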

Tip: Run a Screaming Frog crawl of XML sitemap(s) by using “List” mode. Check the status code of these URLs to make sure that you only see 200s.
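The same check can be sketched in Python. The example below extracts the URLs from a sitemap and reports any that don't return a 200. How you look up the status code is left as an injected function (`get_status`, a hypothetical name), since it could come from a crawl export or from live requests. If you fetch live, make sure your HTTP client does not silently follow redirects, or redirected URLs will look like 200s.

```python
import xml.etree.ElementTree as ET
from typing import Callable, Dict, Iterable, List

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> List[str]:
    """Return every <loc> value listed under <url> entries in a sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def non_200_urls(urls: Iterable[str], get_status: Callable[[str], int]) -> Dict[str, int]:
    """Map each URL whose status code is not 200 to that status code."""
    problems = {}
    for url in urls:
        status = get_status(url)
        if status != 200:
            problems[url] = status
    return problems
```

An empty result from `non_200_urls` means every URL in the sitemap returned a 200; anything else is a candidate for removal or correction.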

Improve Crawl Efficiency

Ecommerce websites are prone to poor crawl efficiency, which can lead to indexing problems. On top of a large number of product, category and subcategory pages, they also have faceted navigation that lets shoppers sort and filter the product catalog. All of these pages and links make it harder for Google to discover and index your important pages efficiently.

Here are ways you can improve the crawl efficiency of your ecommerce site:

Reduce the Number of Crawlable, Non-Indexable URLs

When we were diagnosing our lighting industry client’s indexing issues, we discovered that Google was crawling more than 15,000 URLs related to one category page! Enterprise ecommerce brands are especially susceptible to index bloat.

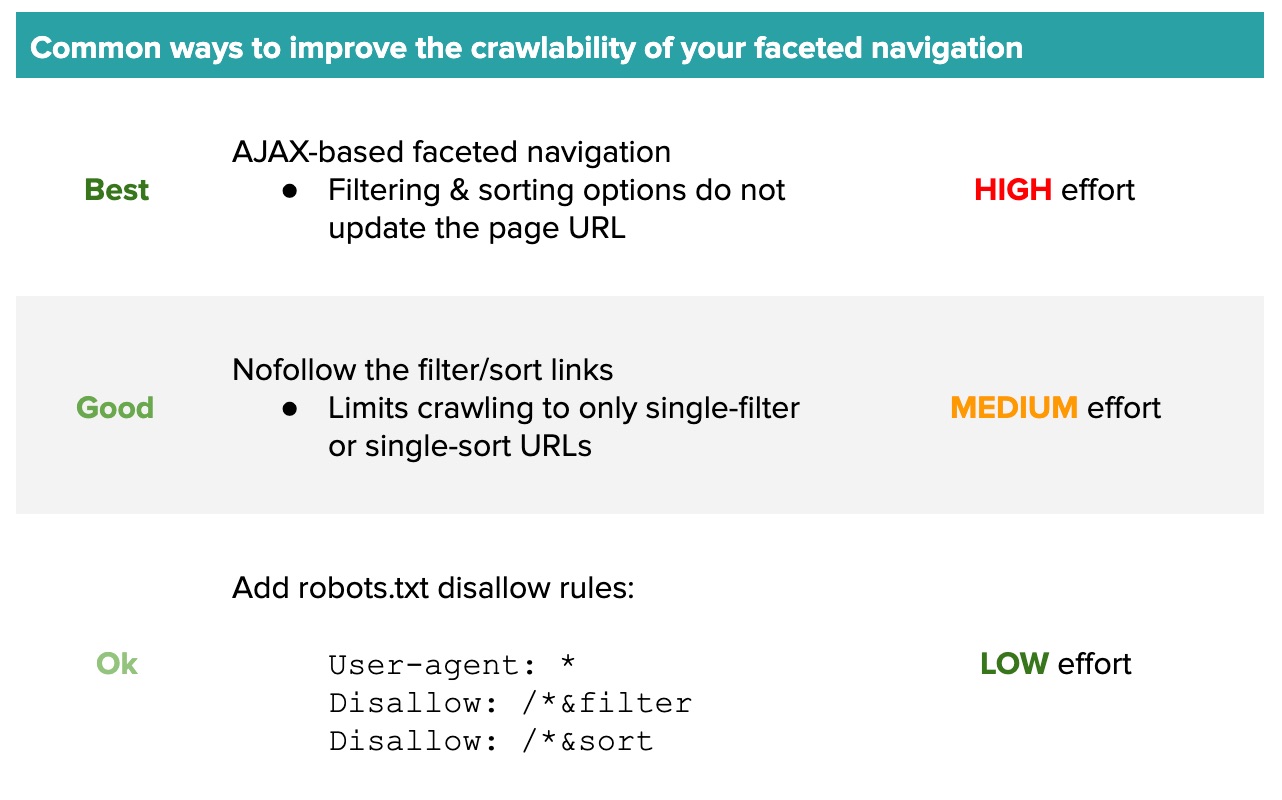

Why so many excess URLs? The faceted navigation on their category pages has multiple links to sort and filter the products. These links append URL parameters to the end of the category page URL (e.g., “?price=high-to-low&size=5”). Google was able to crawl every single combination of these links, which exponentially increased the number of crawlable URLs stemming from just one page.
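To see how quickly these combinations add up, here is a small Python sketch that enumerates every URL a faceted navigation could produce for one category page. The facet names and values are made up for illustration, and the function name is hypothetical.

```python
from itertools import product
from urllib.parse import urlencode


def faceted_urls(base_url, facets):
    """Yield every URL formed by applying zero or one value per facet."""
    names = list(facets)
    # None stands for "this facet is not applied".
    choices = [[None, *facets[name]] for name in names]
    for combo in product(*choices):
        params = [(name, value) for name, value in zip(names, combo) if value is not None]
        yield base_url + ("?" + urlencode(params) if params else "")


# Hypothetical facets for a single category page.
example_facets = {
    "price": ["high-to-low", "low-to-high"],
    "size": ["5", "10", "15"],
    "color": ["brass", "black", "white", "nickel"],
}
example_urls = list(faceted_urls("https://example.com/lamps", example_facets))
```

With just three facets, `len(example_urls)` is 60, because the count is (2 + 1) × (3 + 1) × (4 + 1). Each additional facet multiplies the total again, and the figure grows further if the site also accepts the same parameters in a different order.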

Crawl your website with Screaming Frog and set the user-agent to Googlebot. How many total pages were crawled compared to the number of pages on your website that should be indexed? If you have way too many pages being crawled, then keep an eye out for URLs with parameters being appended to them.
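If you export the crawled URLs to a list, a short Python sketch like the one below can show which pages are generating the most parameterized variants. The function name is hypothetical, and it assumes the input is simply a list of full URLs.

```python
from collections import Counter
from urllib.parse import urlsplit


def parameter_variants_by_path(crawled_urls):
    """Count crawled URLs that carry a query string, grouped by path.

    Returns (path, count) pairs sorted from most to fewest variants.
    """
    counts = Counter()
    for url in crawled_urls:
        parts = urlsplit(url)
        if parts.query:
            counts[parts.path] += 1
    return counts.most_common()
```

Paths at the top of the result are the most likely places where faceted navigation is inflating your crawl.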

If you’ve identified faceted navigation URLs that are weighing down your crawl efficiency, you have several options for fixing the issue. The right solution will largely depend on how your site is built and the availability of development resources.

Important note: Canonical tags alone are not a solution for improving crawl efficiency. Google can still crawl canonicalized URLs!

Implement Breadcrumb Links

Adding breadcrumb links to your category and product pages can help search engines better understand your site structure and allow for link equity to flow between related pages.

Include breadcrumb schema markup to make it even easier for search engines to assess your site structure.
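As a sketch of what that markup looks like, the Python function below builds schema.org `BreadcrumbList` JSON-LD from a breadcrumb trail. The function name and the example trail are hypothetical; the output would go inside a `<script type="application/ld+json">` tag on the page.

```python
import json


def breadcrumb_jsonld(trail):
    """Build BreadcrumbList JSON-LD from an ordered list of (name, url) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical trail for a product page.
example_markup = breadcrumb_jsonld([
    ("Home", "https://example.com/"),
    ("Lamps", "https://example.com/lamps"),
    ("Brass Table Lamp", "https://example.com/lamps/brass-table-lamp"),
])
```

The `position` values start at 1 and follow the order of the visible breadcrumb links, so the markup mirrors the hierarchy users see.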

Use an HTML-Based Pagination Solution

I’ve come across more than a few ecommerce websites with infinite scrolling on their category pages. While this can be a cool experience for users, a solution like this can be difficult for search engines to crawl.

Opt for the traditional pagination links so that users and search engines alike can discover all of your product pages.

Reduce Duplicate Content

Large ecommerce websites often have products that are very similar to one another. These products may only differ by a small specification or one digit in their SKU number. In these cases of near-duplication, Google may choose to not index some of the pages.

Here are a couple of tips you can follow to reduce duplicate content issues and improve the odds that all of your pages will be indexed:

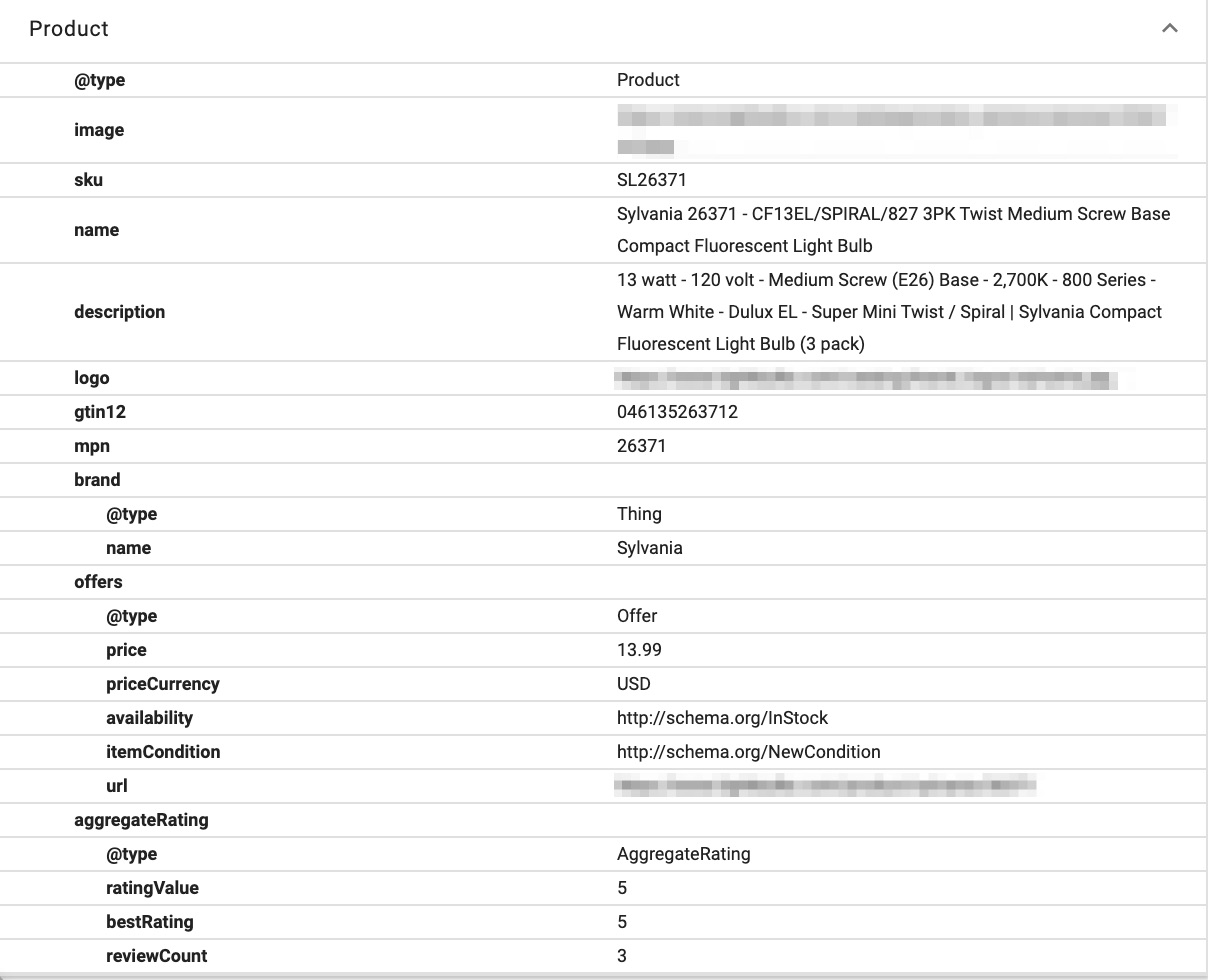

Implement Detailed Product Schema Markup

Go crazy with your product schema markup. Search engines extract information from these attributes, and it can factor into their assessment of the overall uniqueness of a page.
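Here is a minimal Python sketch of generating detailed `Product` JSON-LD from a product record. The property names (`sku`, `mpn`, `color`, `material`, `size`, `brand`, `offers`) are standard schema.org properties, but the record layout, function name and sample values are assumptions for illustration. Which attributes search engines actually weigh is not something this example can confirm.

```python
import json


def product_jsonld(product):
    """Build Product JSON-LD, including only the attributes the record provides."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product["name"],
        "sku": product["sku"],
    }
    # Optional attributes that help distinguish near-identical products.
    for key in ("description", "mpn", "color", "material", "size"):
        if product.get(key):
            data[key] = product[key]
    if product.get("brand"):
        data["brand"] = {"@type": "Brand", "name": product["brand"]}
    if product.get("price") is not None:
        data["offers"] = {
            "@type": "Offer",
            "price": str(product["price"]),
            "priceCurrency": product.get("currency", "USD"),
        }
    return json.dumps(data, indent=2)


# Hypothetical product record.
example_product_markup = product_jsonld({
    "name": "Brass Table Lamp",
    "sku": "LAMP-1001",
    "color": "Brass",
    "size": "5 in",
    "brand": "Example Lighting",
    "price": 129.99,
})
```

Filling in the attributes that differ between similar products (color, size, material, SKU) is the part most relevant to near-duplicate pages.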

Make Sure your Content is Rendering Properly

Maybe you’re thinking, “I have plenty of pages with unique content that are still not being indexed!” If that’s the case, I recommend that you confirm that search engines are able to render this content.

While search engines claim to be able to index JavaScript-rendered content, I don’t believe they’re able to do so with the accuracy or consistency of HTML-rendered content. Not sure if your content is being rendered with JavaScript? Here’s what you can do:

- Download the Chrome extension Quick Javascript Switcher

- Navigate to one of your pages that isn’t being indexed

- Turn off JavaScript using the extension and see if any content disappears from the page

Pay close attention to your product information and customer reviews. If they do not appear after turning off JavaScript, then you may need to replace those sections with an HTML-based solution.
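To run a similar check across many pages, you could compare the raw HTML (the source before any JavaScript runs) against text you expect to see. The Python sketch below is one way to do that, assuming you have already downloaded the page source as a string; the function name is hypothetical. Text that only exists inside `<script>` or `<style>` tags is ignored, since it isn't visible content in the unrendered HTML.

```python
from html.parser import HTMLParser


class _VisibleTextExtractor(HTMLParser):
    """Collects text nodes, skipping anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_raw_html(html, expected_snippets):
    """Return the expected snippets that do not appear in the raw HTML text."""
    extractor = _VisibleTextExtractor()
    extractor.feed(html)
    # Normalize whitespace and case so formatting differences don't cause misses.
    text = " ".join(" ".join(extractor.chunks).split()).lower()
    return [
        snippet
        for snippet in expected_snippets
        if " ".join(snippet.split()).lower() not in text
    ]
```

Any snippet returned by `missing_from_raw_html` (a product description or a review excerpt, for example) is likely being injected by JavaScript and is worth a closer look.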

Check out our guide on JavaScript SEO for information on how to identify, test and resolve indexing issues.

Final Thoughts

It’s unlikely that there is a single cause contributing to your indexing problems. As you audit your website and identify possible issues, implement fixes one at a time and monitor your Coverage reports in Google Search Console to understand what is or is not working.

If you need help diagnosing and resolving indexing issues, reach out to Uproer’s ecommerce SEO experts at [email protected].